2016-03-07: Archives Unleashed Web Archive Hackathon Trip Report (#hackarchives)

|

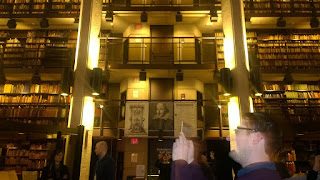

| The Thomas Fisher Rare Book Library (University of Toronto) |

From March 3 to March 5, 2016, librarians, archivists, historians, computer scientists, and others came together for the Archives Unleashed Web Archive Hackathon at the University of Toronto's Robarts Library in Toronto, Ontario, Canada. This event gave researchers the opportunity to collaboratively develop open-source tools for web archives. The event was organized by Ian Milligan (assistant professor of Canadian and digital history in the Department of History at the University of Waterloo), Nathalie Casemajor (assistant professor in communication studies in the Department of Social Sciences at the University of Québec in Outaouais, Canada), Jimmy Lin (the David R. Cheriton Chair in the David R. Cheriton School of Computer Science at the University of Waterloo), Matthew Weber (assistant professor in the School of Communication and Information at Rutgers University), and Nicholas Worby (the Government Information & Statistics Librarian at the University of Toronto's Robarts Library).

The event was made possible by the support of the Social Sciences and Humanities Research Council of Canada, the National Science Foundation, the University of Waterloo, the University of Toronto, Rutgers University, the University of Québec in Outaouais, the Internet Archive, Library and Archives Canada, and Compute Canada. Sawood Alam, Mat Kelly, and I joined researchers from Europe and North America to exchange ideas in an effort to unleash our web archives. The event was split across three days.

DAY 1, THURSDAY MARCH 3, 2016

Ian Milligan kicked off the presentations by outlining the agenda. Following this, he presented his current research effort:

.@ianmilligan1 starts off #hackarchives with an outline of what will be accomplished at the Hackathon. #webarchives pic.twitter.com/GRscT5k2z5— Mat Kelly (@machawk1) March 3, 2016

HistoryCrawling with Warcbase (Ian Milligan, Jimmy Lin)

The presenters introduced Warcbase as a platform for exploring the past. Warcbase is an open-source tool for managing web archives, built on Hadoop and HBase. It was introduced through two case studies and datasets: Canadian Political Parties and Political Interest Groups (2005 - 2015), and GeoCities.

.@ianmilligan1 closes on WARCbase usage: 1: Grab data 2/3: Filter/find sites of interest 4: Analyze text 5: Step 5: Profit! #hackarchives— Mat Kelly (@machawk1) March 3, 2016

Put Hacks to Work: Archives in Research (Matthew Weber)

Following Ian Milligan's presentation, Matthew Weber emphasized some important ideas to guide the development of tools for web archives, such as considering the audience.

My hackathon yacking is over - now Matthew Weber up on putting hacks to work. #hackarchives pic.twitter.com/jZijiLypTv— Ian Milligan (@ianmilligan1) March 3, 2016

Who is audience >> not just abt developing database backend, .@docmattweber #hackarchives— Neha Gupta (@archaeomap) March 3, 2016

.@docmattweber stresses the importance of questioning the reliability and validity of our web archive data sources #hackarchives— Jeremy Wiebe (@jeremyw) March 3, 2016

Archive Research Services Workshop (Jefferson Bailey, Vinay Goel)

Following Matthew Weber's presentation, Jefferson Bailey and Vinay Goel presented a comprehensive introduction workshop for researchers, developers, and general users. The workshop addressed data mining and computational tools and methods for working with web archives.

.@jefferson_bail & @vinaygo On his workshop, given WARC files, build derivatives files about contents https://t.co/i9rgWnmvYE #hackarchives— Mat Kelly (@machawk1) March 3, 2016

.@vinaygo : Once index created, build own visualizations on Kibana; #hackarchives— Neha Gupta (@archaeomap) March 3, 2016

Lots of data, derived formats, and APIs being presented by @jefferson_bail and @vinaygo. No shortage of things to play with at #hackarchives— Jeremy Wiebe (@jeremyw) March 3, 2016

.@jefferson_bail mentioned available @archiveitorg API for utilization during #hackarchives . Hoping to test this out instead of scraping— Mat Kelly (@machawk1) March 3, 2016

Embedded Metadata as Mobile Micro Archives (Nathalie Casemajor)

Following Jefferson Bailey and Vinay Goel's presentation, Nathalie Casemajor presented her research effort for tracking the evolution of images shared on the web. She talked about how embedded metadata in images helped track dissemination of images shared on the web.

.@ncasemajor on a constellation of derivative objects - how things are transformed, live/die. #hackarchives pic.twitter.com/2a7E5BkpHd— Ian Milligan (@ianmilligan1) March 3, 2016

@ncasemajor at #hackarchives: micro archives, metadata & disfunctionality in exploring the social life of #digital things— Katherine Cook (@KatherineRCook) March 3, 2016

Examine usage patterns, memology, visibility & copyright @ncasemajor > social life of #digital things, derivative objects #hackarchives— Neha Gupta (@archaeomap) March 3, 2016

Now @ncasemajor on 'yack'-ey questions like "when we publish an image on the web, where does it go?" Memes, vitality, usage. #hackarchives— Ian Milligan (@ianmilligan1) March 3, 2016

Revitalization of the Web Archiving Program at LAC (Tom Smyth)

Following Nathalie Casemajor's presentation, Tom Smyth of the Library and Archives Canada presented their archiving activities such as the domain crawls of Federal sites, curation of thematic research collections, and preservation archiving of resources at risk. He also talked about their recent collections such as Federal Election 2015, First World War Commemoration, and the Truth and Reconciliation collections.

After the first five short presentations, Jimmy Lin presented a technical tutorial on Warcbase. Helge Holzmann then presented ArchiveSpark, a framework built to make web archives easier for researchers to access by simplifying data extraction and derivation.

.@helgeho's ArchiveSpark @jcdl2016 project can easily extract and access data from #webarchives https://t.co/SVS7Ro9vWM #hackarchives— Mat Kelly (@machawk1) March 3, 2016

ArchiveSpark simplifies spark process, documents data lineage, transformations @helgeho #hackarchives— Neha Gupta (@archaeomap) March 3, 2016

After a short break, there were five more presentations targeting Web Archiving and Textual Analysis Tools:

Wordfish (Federico Nanni)

Federico Nanni presented Wordfish, an R program used to extract political positions from text documents. Wordfish is a scaling technique: it does not need any anchoring documents to perform the analysis, relying instead on a statistical model of word frequencies.

.@f_nanni's Wordfish tool uses R to extract political positions from text documents, ran on most recent debate on flight to #hackarchives— Mat Kelly (@machawk1) March 3, 2016

You can find out more about WordFish at https://t.co/FWxoxlHtfD. @jeremyw had some ideas about incorporating into warcbase… #HackArchives— Ian Milligan (@ianmilligan1) March 3, 2016

MemGator (Sawood Alam)

Following Federico Nanni's presentation, Sawood Alam presented a tool he developed called MemGator: a Memento Aggregator CLI and server written in Go. Memento is a framework that adds the time dimension to the web. A timestamped copy of the representation of a resource is also called a Memento, and a list of such Mementos is called a TimeMap. MemGator can generate the TimeMap of a given URI or provide the closest Memento to a given time.
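
To make the "closest Memento" idea concrete, here is a minimal sketch in Python. It is not MemGator's code or API: the TimeMap is a hand-written list, the archive URIs are placeholders, and `closest_memento` is a hypothetical helper that simply picks the capture nearest in time to the requested datetime.

```python
from datetime import datetime, timezone

# Hypothetical TimeMap: (capture datetime, Memento URI) pairs for one original URI.
# The URIs are placeholders, not real archive captures.
timemap = [
    (datetime(2014, 5, 1, tzinfo=timezone.utc),
     "https://archive.example/20140501/http://example.com/"),
    (datetime(2015, 9, 12, tzinfo=timezone.utc),
     "https://archive.example/20150912/http://example.com/"),
    (datetime(2016, 3, 3, tzinfo=timezone.utc),
     "https://archive.example/20160303/http://example.com/"),
]


def closest_memento(timemap, target):
    """Return the (datetime, URI) entry whose capture time is nearest to target."""
    if not timemap:
        raise ValueError("empty TimeMap")
    return min(timemap, key=lambda entry: abs(entry[0] - target))


when, uri = closest_memento(timemap, datetime(2015, 12, 25, tzinfo=timezone.utc))
print(when.date(), uri)
```

An aggregator would build the list by merging the TimeMaps returned by several archives before running a selection like this one.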

.@ibnesayeed's #MemGator A #Memento Aggregator CLI and Server in Go https://t.co/8LS2U2w99t #hackarchives @WebSciDL— Mat Kelly (@machawk1) March 3, 2016

Try @ibnesayeed's #MemGator in your browser https://t.co/CFTqym7FiI Poke around. Try to break it! #hackarchives @WebSciDL— Mat Kelly (@machawk1) March 3, 2016

@ibnesayeed's MemGator can also be run as a @Docker container! https://t.co/yzdPeiDdFq #hackarchives @WebSciDL— Mat Kelly (@machawk1) March 3, 2016

Topic Words in Context (Jonathan Armoza)

Following Sawood Alam's presentation, Jonathan Armoza presented a tool he developed, TWIC (Topic Words in Context), by demonstrating LDA topic modeling of Emily Dickinson's poetry. TWIC provides a hierarchical visualization of LDA topic models generated by the MALLET topic modeler.

.@JonathaNgrams demoing topic modeling of Emily Dickenson's poetry w/ #d3js for data exploration https://t.co/7Ld7GjaLtU #hackarchives— Mat Kelly (@machawk1) March 3, 2016

Following Jonathan Armoza's presentation, Nick Ruest presented Twarc: a Python command-line tool and library for archiving tweet JSON data. Twarc runs in three modes: search, filter stream, and hydrate.

Following Nick Ruest's presentation, I presented Carbon date: a tool originally developed by Hany SalahEldeen, which I currently maintain. Carbon date estimates the creation date of a website by polling multiple sources for datetime evidence. It returns a JSON response that contains the estimated creation date of the website.
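
The general shape of that process can be sketched in a few lines of Python. This is an illustration, not Carbon date's actual code: the source names, the JSON field names, and the rule of taking the earliest reported datetime as the estimate are all assumptions made for the example.

```python
import json
from datetime import datetime


def estimate_creation_date(uri, evidence):
    """Use the earliest datetime reported by any source as the estimate.

    `evidence` maps a (hypothetical) source name to an ISO 8601 string,
    or None when that source found nothing. Earliest-wins is an
    assumption of this sketch.
    """
    found = [datetime.fromisoformat(value) for value in evidence.values() if value]
    estimate = min(found).isoformat() if found else None
    return json.dumps(
        {"uri": uri, "estimated-creation-date": estimate, "sources": evidence},
        indent=2,
    )


# Placeholder evidence; in practice each value would come from polling a source.
evidence = {
    "source-a": "2013-07-04T10:00:00",
    "source-b": None,
    "source-c": "2012-11-20T08:30:00",
}
print(estimate_creation_date("http://example.com/", evidence))
```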

How old is the website you are visiting? Check it out here: https://t.co/IfyTlMsFIq #hackarchives https://t.co/UHqP51Gm3x— Patrick Egan (@mrpatrickegan) March 3, 2016

After the five short presentations about Web Archiving and Textual Analysis Tools, all participants engaged in a brainstorming session in which ideas were discussed and clusters of researchers with common interests were iteratively developed. The brainstorming session led to the formation of seven groups, namely:

- I know words and images

- Searching, mining, everything

- Interplanetary WayBack

- Surveillance of First Nations

- Nuage

- Graph‐X‐Graphics

- Tracking Discourse in Social Media

Teams are forming! #hackArchives pic.twitter.com/MLTtstmUfW— Ian Milligan (@ianmilligan1) March 3, 2016

The Twitter "twits" are forming. 😉 #hackArchives pic.twitter.com/T0R1sDwgye— Ian Milligan (@ianmilligan1) March 3, 2016

Following the brainstorming and group formation activity, all participants gathered at the Bedford Academy for a reception that lasted into the late evening.

Finishing up day one of #hackArchives with introductions - over a few libations. Thanks all for a great start! pic.twitter.com/t2FVpIaXUj— Ian Milligan (@ianmilligan1) March 4, 2016

DAY 2, FRIDAY MARCH 4, 2016

In the main room, the overpowering sound of clacking keyboards! #hackarchives pic.twitter.com/HmEewJwbby— Ian Milligan (@ianmilligan1) March 4, 2016

The second day of the Archives Unleashed Web Archive Hackathon began with breakfast, after which the groups formed on Day 1 met for about three hours to begin working on the ideas discussed the previous day. At noon, lunch was provided as more presentations took place:

Evan Light began the series of presentations by talking about a box he created called the Snowden Archive-in-a-Box. The box features a stand-alone wifi network and web server that allows researchers to use the files leaked by Edward Snowden and subsequently published by the media. The box, which serves as a portable archive, protects users from mass surveillance.

This is awesome! Snowden Archive-in-Box #hackarchives pic.twitter.com/4qOEphNPf9— nick ruest (@ruebot) March 4, 2016

Mediacat (Alejandro Paz and Kim Pham)

Following Evan Light's presentation, Alejandro Paz and Kim Pham presented Mediacat: an open-source web crawler and archive application suite which enables ethnographic research to understand how digital news is disseminated and used across the web.

Data Mining the Canadian Media Public Sphere (Sylvain Rocheleau)

Following Alejandro Paz and Kim Pham's presentation, Sylvain Rocheleau talked about his research efforts to provide near-real-time data mining of the Canadian news media. His research involves a mass crawl of about 700 Canadian news websites at 15-minute intervals, and data mining processes that include Named Entity Recognition.

Tweet Analysis with Warcbase (Jimmy Lin)

Following Sylvain Rocheleau's presentation, Jimmy Lin gave another tutorial in which he showed how to extract information from tweets using the Warcbase platform.

A five-hour hackathon session followed, briefly suspended for a visit to the Thomas Fisher Rare Book Library.

Ooohs and ahhhs as #hackArchives attendees get their photo op at the Fisher Rare Books Library. pic.twitter.com/sdIFT3h4t1— Ian Milligan (@ianmilligan1) March 4, 2016

After the visit to the Thomas Fisher Rare Book Library, the hackathon session continued until the evening, after which all participants went for dinner at the University of Toronto Faculty Club.

DAY 3, SATURDAY MARCH 5, 2016

Go go gadget hackathon #hackarchives pic.twitter.com/QoaulPwKUA— KJ (@thundersnowjane) March 5, 2016

The third and final day of the Archives Unleashed Web Archive Hackathon followed the same pattern as the second: breakfast, then a three-hour hackathon session, then presentations over lunch:

Malach Collection (Petra Galuscakova)

Petra Galuscakova started the series of presentations by talking about the Czech Malach Cross-lingual Speech Retrieval Test Collection: a collection of multimedia about the testimonies of survivors and other witnesses of the Holocaust.

Waku (Kyle Parry)

Digital Arts and Humanities Initiatives at UH Mānoa (or how to do interesting things with few resources) (Richard Rath)

After the presentations, the hackathon session continued until 4:30 pm EST, after which the group presentations began:

PRESENTATIONS

I know words and images (Kyle Parry, Niel Chah, Emily Maemura, and Kim Pham)

Inspired by John Oliver's #MakeDonaldDrumpfAgain, this team sought to research memes by processing words and images. They investigated what people say, how they use and modify the text and images of others, and how computers read text and classify images, etc.

Searching, mining, everything (Jaspreet Singh, Helge Holzmann, and Vinay Goel)

Interplanetary WayBack (Sawood Alam and Mat Kelly)

"Who will archive the archives?"

To answer this question, Sawood Alam and Mat Kelly presented the archiving and replay system called Interplanetary Wayback (ipwb). In a nutshell, during the indexing process ipwb consumes WARC files one record at a time, splits each record into headers and payload, pushes the two pieces into the IPFS (a peer‐to‐peer file system) network for persistent storage, and stores the references (digests) in an index file format called CDXJ along with some other lookup keys and metadata. For replay, it finds the records in the index file and builds the response by assembling the headers and payload retrieved from the IPFS network and performing the necessary rewrites. The major benefits of this system include deduplication, redundancy, and shared open access.
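
The indexing and replay flow described above can be sketched as follows. This is a simplified illustration, not ipwb itself: `ContentStore` is an in-memory stand-in for IPFS keyed by SHA-256 digest, the index is a plain list of dictionaries rather than real CDXJ, the records are hand-written HTTP responses rather than parsed WARC records, and the rewriting step is omitted.

```python
import hashlib


class ContentStore:
    """In-memory stand-in for a content-addressed network such as IPFS."""

    def __init__(self):
        self.blocks = {}

    def add(self, data: bytes) -> str:
        digest = hashlib.sha256(data).hexdigest()
        self.blocks[digest] = data  # identical content maps to the same key
        return digest

    def get(self, digest: str) -> bytes:
        return self.blocks[digest]


def index_record(store, index, uri, timestamp, record: bytes):
    """Split one response record into headers and payload, store both, index the digests."""
    headers, _, payload = record.partition(b"\r\n\r\n")
    index.append({
        "uri": uri,
        "timestamp": timestamp,
        "header_digest": store.add(headers),
        "payload_digest": store.add(payload),
    })


def replay(store, index, uri, timestamp) -> bytes:
    """Find the index entry and reassemble the response from the stored pieces."""
    for entry in index:
        if entry["uri"] == uri and entry["timestamp"] == timestamp:
            return (store.get(entry["header_digest"]) + b"\r\n\r\n"
                    + store.get(entry["payload_digest"]))
    raise KeyError((uri, timestamp))


store, index = ContentStore(), []
body = b"<html>hello</html>"
index_record(store, index, "http://example.com/", "20160303120000",
             b"HTTP/1.1 200 OK\r\nDate: Thu, 03 Mar 2016 12:00:00 GMT\r\n\r\n" + body)
index_record(store, index, "http://example.com/", "20160304120000",
             b"HTTP/1.1 200 OK\r\nDate: Fri, 04 Mar 2016 12:00:00 GMT\r\n\r\n" + body)
print(len(store.blocks))  # two distinct header blocks plus one shared payload block
```

Because the two captures share an identical payload, the store holds it only once, which is the deduplication benefit in miniature.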

Surveillance of First Nations (Evan Light, Katherine Cook, Todd Suomela, and Richard Rath)

Nuage (Petra Galuscakova, Neha Gupta, Rosa Iris R. Rovira, Nathalie Casemajor, Sylvain Rocheleau, Ryan Deschamps, and Ruqin Ren)

Graph‐X‐Graphics (Jeremy Wiebe, Eric Oosenbrug, and Shane Martin)

Tracking Discourse in Social Media (Tom Smyth, Allison Hegel, Alexander Nwala, Patrick Egan, Nick Ruest, Yu Xu, Kelsey Utne, Jonathan Armoza, and Federico Nanni)

This team processed ~11.2 million tweets and ~50 million reddit comments that referenced the Charlie Hebdo and Bataclan attacks, in an effort to track the evolution of social media commentary about the attacks. The team sought to measure the attention span, the flow of information and misinformation, and the co-occurrence network of terms in order to understand the dynamics of commentary about these events.
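
For readers unfamiliar with co-occurrence networks, the Python sketch below shows one simple way to derive the edges: count how often two terms appear in the same document. It is not the team's pipeline; the whitespace tokenization, the `cooccurrence_counts` helper, and the placeholder documents are assumptions made for illustration.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_counts(documents):
    """Count how often each unordered pair of distinct terms shares a document."""
    counts = Counter()
    for text in documents:
        # Deduplicate and sort so each pair has one canonical (a, b) form.
        terms = sorted(set(text.lower().split()))
        counts.update(combinations(terms, 2))
    return counts


# Placeholder documents standing in for tweets or comments.
docs = ["alpha beta gamma", "beta gamma delta", "alpha delta"]
edges = cooccurrence_counts(docs)
print(edges[("beta", "gamma")])
```

Each pair with a nonzero count becomes a weighted edge in the network, with the terms as nodes.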

The votes were tallied, and the Nuage team received the most votes and was declared the winner. The event concluded after some closing remarks.

-- Nwala (@acnwala)
